Artificial Intelligence Risk Management Framework

The Artificial Intelligence Risk Management Framework (AI RMF 1.0) is a voluntary framework that helps organizations manage the risks of using artificial intelligence (AI) and support their compliance efforts.

Introduction

The Artificial Intelligence Risk Management Framework (AI RMF 1.0) helps organizations manage risk when developing, deploying, and using artificial intelligence (AI). Developed by the U.S. National Institute of Standards and Technology (NIST), it is voluntary and provides a structured method to identify, assess, and manage the risks associated with AI systems. The framework offers guidance for developing, implementing, and monitoring AI systems that organizations can draw on to support their compliance efforts. The following sections outline how the framework describes AI risks and the characteristics of trustworthy AI.

AI Risks and Trustworthiness

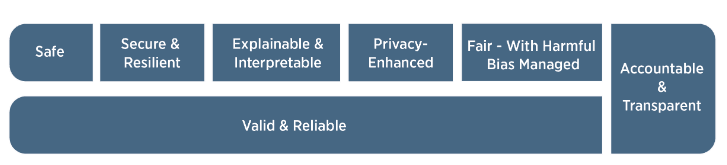

For AI systems to be considered trustworthy, they typically need to satisfy a range of criteria that matter to the stakeholders involved. Approaches that improve AI trustworthiness can also reduce AI-related risks. The characteristics of trustworthy AI systems are: validity and reliability, safety, security and resilience, accountability and transparency, explainability and interpretability, privacy, and fairness. Building trustworthy AI requires balancing these characteristics against one another in the specific context in which each system is deployed. While all of these characteristics are attributes of socio-technical systems, accountability and transparency also relate to the processes and activities within an AI system and its external environment. Neglecting these characteristics can increase both the likelihood and the magnitude of negative consequences.

In the figure, validity and reliability form the base because they are necessary conditions for trustworthiness on which all other characteristics build. Accountability and transparency are shown as a vertical box because they relate to every other characteristic.

Validity and Reliability

Validation is the confirmation, through objective evidence, that the requirements for a particular intended use have been met. AI systems that are inaccurate, unreliable, or that generalize poorly to data and settings beyond their training increase AI-related risks and reduce trustworthiness. Reliability is the ability of a system to function as required, without failure, for a specified period under specified conditions. It serves as a goal for the overall correctness of an AI system's operation under expected conditions and across its entire lifespan.
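
As a rough illustration of these definitions, the sketch below treats validation as checking held-out evidence against a stated requirement, and reliability as the share of failure-free runs under specified conditions. The 0.9 accuracy requirement, the function names, and the data are hypothetical; the framework does not prescribe particular metrics or thresholds.

```python
from typing import Sequence


def accuracy(y_true: Sequence[int], y_pred: Sequence[int]) -> float:
    """Fraction of predictions that match the reference labels."""
    if not y_true or len(y_true) != len(y_pred):
        raise ValueError("labels and predictions must be non-empty and equal in length")
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def meets_requirement(y_true: Sequence[int], y_pred: Sequence[int],
                      required_accuracy: float = 0.9) -> bool:
    """Validation check: does held-out evidence satisfy the (hypothetical) requirement?"""
    return accuracy(y_true, y_pred) >= required_accuracy


def reliability(run_succeeded: Sequence[bool]) -> float:
    """Share of runs, under specified conditions, that completed without failure."""
    if not run_succeeded:
        raise ValueError("need at least one observed run")
    return sum(run_succeeded) / len(run_succeeded)


# Hypothetical labels, plus predictions on held-out data from the training
# setting and on data from a shifted deployment setting.
labels = [1, 0, 1, 1, 0, 1, 0, 1, 1, 0]
in_setting = [1, 0, 1, 1, 0, 1, 0, 1, 1, 1]
shifted = [0, 0, 1, 0, 0, 1, 1, 1, 0, 0]

print(meets_requirement(labels, in_setting))  # True  (accuracy 0.9)
print(meets_requirement(labels, shifted))     # False (accuracy 0.6)
print(reliability([True] * 19 + [False]))     # 0.95
```

The drop from 0.9 to 0.6 on the shifted data is the kind of poor generalization beyond training settings that the paragraph above associates with higher risk.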

Safety

AI systems should not create conditions that endanger human life, health, property, or the environment. Safe operation of AI systems is enhanced by:

- Responsible design, development, and deployment practices

- Clear information for deployers on responsible system usage

- Responsible decision-making by deployers and end users

- Documentation of risks based on empirical evidence from incidents

Different types of safety risks may require tailored risk management approaches based on context and severity. Risks that carry the potential for serious injury or death demand the highest prioritization and the most thorough risk management process.
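
A small risk register can illustrate severity-based prioritization. The three-level severity scale, the mapping from severity to review effort, and the example risks are all assumptions for this sketch; the framework does not define a scoring scheme.

```python
from dataclasses import dataclass
from enum import IntEnum


class Severity(IntEnum):
    """Hypothetical three-level severity scale."""
    MINOR = 1
    MODERATE = 2
    SERIOUS_INJURY_OR_DEATH = 3


@dataclass
class SafetyRisk:
    name: str
    severity: Severity
    likelihood: float  # estimated probability of occurrence, 0 to 1


def prioritize(risks: list[SafetyRisk]) -> list[SafetyRisk]:
    """Order risks by severity, then likelihood, both descending.

    Sorting on severity first means a rare life-safety risk still
    outranks a frequent but minor one.
    """
    return sorted(risks, key=lambda r: (r.severity, r.likelihood), reverse=True)


def review_depth(risk: SafetyRisk) -> str:
    """Map severity to a (hypothetical) level of risk management effort."""
    if risk.severity is Severity.SERIOUS_INJURY_OR_DEATH:
        return "full hazard analysis and independent review"
    if risk.severity is Severity.MODERATE:
        return "documented mitigation plan"
    return "log and monitor"


register = [
    SafetyRisk("confusing status message", Severity.MINOR, 0.7),
    SafetyRisk("missed obstacle during navigation", Severity.SERIOUS_INJURY_OR_DEATH, 0.01),
    SafetyRisk("stale map data", Severity.MODERATE, 0.1),
]
for risk in prioritize(register):
    print(f"{risk.name}: {review_depth(risk)}")
```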

Security and Resilience

AI systems, along with the ecosystems in which they operate, are considered resilient if they can withstand unexpected adverse events or changes in their environment. This means they maintain their functions and structure despite internal and external disruptions, or degrade safely and gracefully when necessary. Common security concerns in this context include adversarial examples, data poisoning, and the exfiltration of models, training data, or other intellectual property through AI system endpoints. AI systems that maintain confidentiality, integrity, and availability through safeguards preventing unauthorized access and use can be considered secure.
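
Degrading "safely and gracefully" can be sketched as a wrapper that returns a conservative fallback, and reports why, when an input falls outside the range the model was validated on or when the model call itself fails. The validated range, fallback value, and function name are hypothetical, and this covers only graceful degradation; it is not a defense against adversarial examples, data poisoning, or model exfiltration.

```python
from typing import Callable, Optional, Tuple


def predict_with_fallback(
    model: Callable[[float], float],
    x: float,
    validated_range: Tuple[float, float] = (0.0, 100.0),
    fallback: Optional[float] = None,
) -> Tuple[Optional[float], str]:
    """Return (prediction, status), degrading to a fallback instead of failing.

    validated_range and fallback are hypothetical: the inputs the model was
    validated on, and a conservative value (None here, meaning "defer to a
    human") used whenever the model should not be trusted.
    """
    low, high = validated_range
    if not low <= x <= high:
        # Outside validated conditions: do not extrapolate silently.
        return fallback, "out_of_range"
    try:
        return model(x), "ok"
    except Exception:
        # Internal disruption: keep the service up and report the degradation.
        return fallback, "model_error"


print(predict_with_fallback(lambda v: 2 * v, 10.0))       # (20.0, 'ok')
print(predict_with_fallback(lambda v: 2 * v, 500.0))      # (None, 'out_of_range')
print(predict_with_fallback(lambda v: 1 / (v - v), 5.0))  # (None, 'model_error')
```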

Accountability and Transparency

Trustworthy AI is built on accountability, and accountability requires transparency. Transparency reflects the extent to which information about an AI system and its outputs is available to the people who interact with it, whether or not they are aware that they are doing so. Meaningful transparency means providing appropriate information aligned with the AI system's stage of development and with the roles, knowledge, and expertise of the stakeholders involved. By fostering deeper understanding, transparency strengthens confidence in the AI system. This characteristic spans everything from design decisions and training data to model architecture, intended use cases, and how, when, and by whom deployment or post-deployment decisions were made. Transparency is often a prerequisite for taking effective corrective action when an AI system produces incorrect or otherwise harmful outputs.
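
One way to keep this information available is a structured record maintained alongside the system, with a view that scales detail to the reader's role. The field names, roles, and example values below are illustrative assumptions loosely in the spirit of model documentation practices, not a schema defined by the framework.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class DeploymentDecision:
    """Who decided what, and when."""
    description: str
    decided_on: date
    decided_by: str


@dataclass
class TransparencyRecord:
    """Hypothetical documentation record; field names are illustrative."""
    system_name: str
    intended_uses: list[str]
    training_data: str
    model_architecture: str
    design_decisions: list[str] = field(default_factory=list)
    deployment_decisions: list[DeploymentDecision] = field(default_factory=list)

    def view_for(self, role: str) -> dict:
        """Return information scaled to the stakeholder's (hypothetical) role."""
        summary = {"system_name": self.system_name, "intended_uses": self.intended_uses}
        if role == "end_user":
            return summary
        # Operators, auditors, and other technical roles get the full history.
        return {
            **summary,
            "training_data": self.training_data,
            "model_architecture": self.model_architecture,
            "design_decisions": self.design_decisions,
            "deployment_decisions": [
                (d.description, d.decided_on.isoformat(), d.decided_by)
                for d in self.deployment_decisions
            ],
        }


record = TransparencyRecord(
    system_name="loan-prescreening",
    intended_uses=["rank applications for human review"],
    training_data="2019-2022 historical applications",
    model_architecture="gradient-boosted trees",
    design_decisions=["exclude ZIP code as an input feature"],
    deployment_decisions=[DeploymentDecision("pilot launch", date(2023, 5, 1), "model risk committee")],
)
print(record.view_for("end_user"))
print(record.view_for("auditor")["deployment_decisions"])
```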

Explainability and Interpretability

Explainability refers to how the mechanisms underlying an AI system's operation are described, while interpretability concerns the meaning of system outputs in the context of their intended purpose. Together, they enable operators, monitors, and users to gain deeper insights into a system's functionality and trustworthiness. The underlying assumption is that negative risks often stem from an inability to adequately understand or contextualize system outputs. Explainable and interpretable AI systems provide information that helps end users understand the purposes and potential effects of an AI system. Risks arising from a lack of explainability can be managed by describing AI system operations in ways that account for individual differences such as user role, knowledge, and skill level. Explainable systems are also easier to debug and monitor, and they lend themselves to more thorough documentation, auditing, and governance.
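
For a simple linear scoring model, an individual output can be explained by reporting each feature's contribution (weight times value). The sketch below, with a hypothetical model and applicant, also adjusts the level of detail to the audience, echoing the point about user role and skill level. Complex models such as deep networks need other explanation techniques; this only illustrates the idea.

```python
def contributions(weights: dict[str, float], features: dict[str, float]) -> dict[str, float]:
    """Per-feature contribution to a linear score: weight * value."""
    return {name: weights[name] * features[name] for name in weights}


def explain(weights, bias, features, role="end_user", top_k=1):
    """Explain one prediction of a linear model, with detail scaled to the audience."""
    contrib = contributions(weights, features)
    score = bias + sum(contrib.values())
    # Rank features by the size of their effect, regardless of sign.
    ranked = sorted(contrib.items(), key=lambda kv: abs(kv[1]), reverse=True)
    if role == "end_user":
        main = ", ".join(name for name, _ in ranked[:top_k])
        return f"Score {score:.2f}; main factor(s): {main}"
    # Developers and auditors get every term, which also helps debugging.
    return {"score": score, "bias": bias, "contributions": dict(ranked)}


weights = {"income": 0.5, "debt": -1.0}  # hypothetical model
applicant = {"income": 4.0, "debt": 3.0}
print(explain(weights, 0.5, applicant))  # Score -0.50; main factor(s): debt
print(explain(weights, 0.5, applicant, role="auditor"))
```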

Privacy

Privacy generally refers to the norms and practices that help protect human autonomy, identity, and dignity. These typically address freedom from intrusion, restrictions on observation, and the ability of individuals to consent to or refuse the disclosure of aspects of their identity (e.g., body, data, reputation). Privacy values such as anonymity, confidentiality, and control should guide AI system design, development, and deployment decisions. Privacy-related risks can affect security, bias mitigation, and transparency, requiring careful trade-offs against these other characteristics. As with safety, certain technical features of an AI system can either strengthen or weaken privacy. AI systems may also introduce new privacy risks by enabling the identification of individuals or the inference of previously inaccessible information about them.
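
The risk of identifying individuals from released data can be checked with k-anonymity, named here as one well-known technique rather than something the framework requires: a dataset is k-anonymous over a set of quasi-identifiers if every combination of their values is shared by at least k records. The column names and records are hypothetical, and k-anonymity alone does not prevent inference, for instance when everyone in a group shares the same sensitive value.

```python
from collections import Counter


def smallest_group_size(records: list[dict], quasi_identifiers: list[str]) -> int:
    """Size of the rarest combination of quasi-identifier values (0 if no records)."""
    groups = Counter(tuple(r[q] for q in quasi_identifiers) for r in records)
    return min(groups.values(), default=0)


def is_k_anonymous(records: list[dict], quasi_identifiers: list[str], k: int) -> bool:
    """True if every quasi-identifier combination is shared by at least k records."""
    return smallest_group_size(records, quasi_identifiers) >= k


patients = [
    {"zip": "02139", "age_band": "30-39", "diagnosis": "flu"},
    {"zip": "02139", "age_band": "30-39", "diagnosis": "asthma"},
    {"zip": "02140", "age_band": "40-49", "diagnosis": "flu"},
]
qi = ["zip", "age_band"]
print(is_k_anonymous(patients, qi, k=2))      # False: the 02140 / 40-49 record is unique
print(is_k_anonymous(patients[:2], qi, k=2))  # True
```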

Fairness

Fairness in AI encompasses issues of equity and justice, addressing problems such as harmful bias and discrimination. Fairness standards can be complex and difficult to define, because perceptions of fairness vary across cultures and may differ depending on the application. An organization's risk management benefits from recognizing and accounting for these differences. Importantly, systems in which harmful biases have been mitigated are not necessarily fair. For example, a system whose predictions are balanced across demographic groups may still be inaccessible to people with disabilities, may still disadvantage people affected by the digital divide, or may even exacerbate existing disparities and systemic biases.
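
The "balanced predictions" in the example above are often measured as demographic parity: the difference in positive-prediction (selection) rates between groups. The sketch computes that gap on hypothetical data; the function names are illustrative.

```python
from collections import defaultdict


def selection_rates(predictions: list[int], groups: list[str]) -> dict[str, float]:
    """Share of positive (1) predictions within each group."""
    if len(predictions) != len(groups):
        raise ValueError("predictions and groups must have the same length")
    totals: dict[str, int] = defaultdict(int)
    positives: dict[str, int] = defaultdict(int)
    for pred, group in zip(predictions, groups):
        totals[group] += 1
        positives[group] += pred
    return {g: positives[g] / totals[g] for g in totals}


def demographic_parity_gap(predictions: list[int], groups: list[str]) -> float:
    """Largest difference in selection rate between any two groups."""
    rates = selection_rates(predictions, groups)
    return max(rates.values()) - min(rates.values())


groups = ["A"] * 4 + ["B"] * 4
print(demographic_parity_gap([1, 1, 1, 0, 1, 0, 0, 0], groups))  # 0.5
print(demographic_parity_gap([1, 0, 1, 0, 0, 1, 1, 0], groups))  # 0.0
```

As the paragraph stresses, a gap of zero shows only that selection rates match; it says nothing about accessibility, the digital divide, or whether the labels themselves encode existing disparities.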

Conclusion

The Artificial Intelligence Risk Management Framework (AI RMF 1.0) helps organizations manage the risks of using artificial intelligence and support their compliance efforts. It provides a structured approach to identifying, assessing, and managing the risks associated with AI system implementation, along with guidance for developing, implementing, and monitoring AI systems. For an AI system to be considered trustworthy, it must satisfy key criteria: validity and reliability, safety, security and resilience, accountability and transparency, explainability and interpretability, privacy, and fairness. These characteristics form the foundation for establishing trustworthiness in AI systems.